Artificial intelligence tools have become a standard part of everyday business life. From drafting emails to summarizing reports to answering customer questions, AI is showing up in nearly every workflow. And for small and mid-sized businesses, the appeal is clear: more efficiency, less busywork, faster results.

But here’s what too many business owners are skipping: the risks.

AI risks for business are real, growing, and often invisible until something goes wrong. Whether it's an employee accidentally sharing confidential client data with a chatbot, an AI tool inventing a statistic that ends up in a proposal, or a compliance violation that triggers a regulatory audit, the consequences can be serious.

This article breaks down what you actually need to know before your team starts using AI freely, and what you can do right now to protect your business.

What Happens to the Data You Enter Into AI Tools?

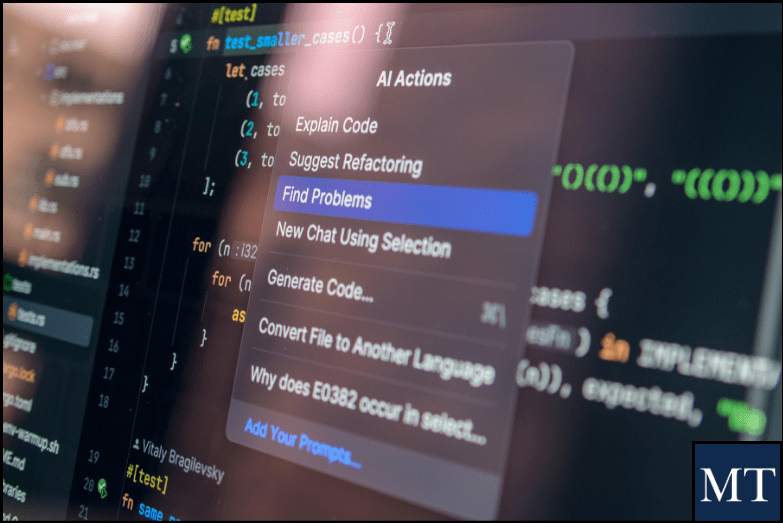

Most people type questions into ChatGPT, Copilot, or another AI tool without thinking twice about what happens to that information afterward. The reality might surprise you.

Many popular AI platforms, especially free or consumer-grade versions, use your inputs by default to improve and train their models. That means client notes, financial details, or internal strategy you paste into a prompt could end up in a training dataset. Organizations know this creates a trust problem: 91% acknowledge they need to do more to reassure customers about how their data is used with generative AI. (Source: Cisco Data Privacy Benchmark 2025)

Enterprise versions of tools such as Microsoft 365 Copilot, configured with proper data governance, offer stronger protections. Consumer AI tools used with their default settings often do not.

Types of information that should never be entered into a public AI tool:

- Client names, contact details, or personal identifiers

- Medical or health information (PHI)

- Financial records, tax data, or banking details

- Proprietary business strategies or trade secrets

- Employee records or HR-related information

- Legal documents or attorney-client communications

AI Hallucinations: When Confident Answers Are Wrong

One of the most misunderstood AI risks for business is what's known as a hallucination. An AI hallucination occurs when a large language model generates output that sounds accurate and authoritative but is factually incorrect or entirely fabricated. The AI typically doesn't flag its uncertainty; it presents the information as if it were fact.

Real-world examples include an Air Canada chatbot that wrongly promised a customer a discount, which a tribunal then required the airline to honor, and attorneys who were sanctioned after submitting AI-generated legal briefs that cited fictional case law. Even leading AI models show documented hallucination rates of 3–5% on some benchmarks, and some newer models hallucinate more frequently, not less. (Source: Descript / IntuitionLabs AI Research)

If your team uses AI to draft proposals, research vendors, summarize contracts, or generate reports – and those outputs are not verified – you may be basing real business decisions on fictional information. That’s not a technology problem. That’s a liability problem.

Compliance Considerations: HIPAA, Financial Data, and More

If your business operates in healthcare, finance, legal, insurance, or any other regulated industry – or if you simply handle customer data – AI usage isn’t just a tech question. It’s a compliance question.

67% of healthcare organizations report being unprepared for stricter AI-related HIPAA security standards. Third-party vendors – including IT providers and cloud services – are now under increased scrutiny too. (Source: Sprypt / Accent Consulting HIPAA 2025)

Violating HIPAA through improper AI use can result in civil fines ranging from $100 to $50,000 per violation (base amounts that are adjusted upward for inflation each year). In financial services, using AI to process client data without proper safeguards can put you in violation of SEC or FINRA rules or state data privacy laws. The key takeaway: before deploying any AI tool, verify that it meets the regulatory requirements that apply to your industry.

The Biggest AI Risks Businesses Overlook

Based on current industry research, here are the AI risks for business that most frequently catch organizations off guard:

- Shadow AI Usage – Employees using personal AI accounts instead of company-approved tools for work tasks, leaving the business with no visibility into or control over what data is shared.

- No Access Controls – According to IBM's 2025 Cost of a Data Breach Report, 97% of organizations that experienced an AI-related breach lacked proper AI access controls.

- Over-Trust in AI Output – Treating AI-generated content as a finished product rather than a first draft that requires human review.

- No AI Usage Policy – Few organizations have formal AI governance in place: fewer than 1 in 10 have integrated AI risk and compliance reviews into their workflows. (Source: Trustmarque AI Governance Report 2025)

- Data Leakage via Prompts – Sensitive information entered into an AI tool may be retained by the provider and could potentially surface in responses to other users if the platform lacks enterprise-grade data controls.

- Regulatory Blind Spots – Many small businesses don't realize that using AI in hiring, customer service, or data processing may already fall under existing laws, or soon will.

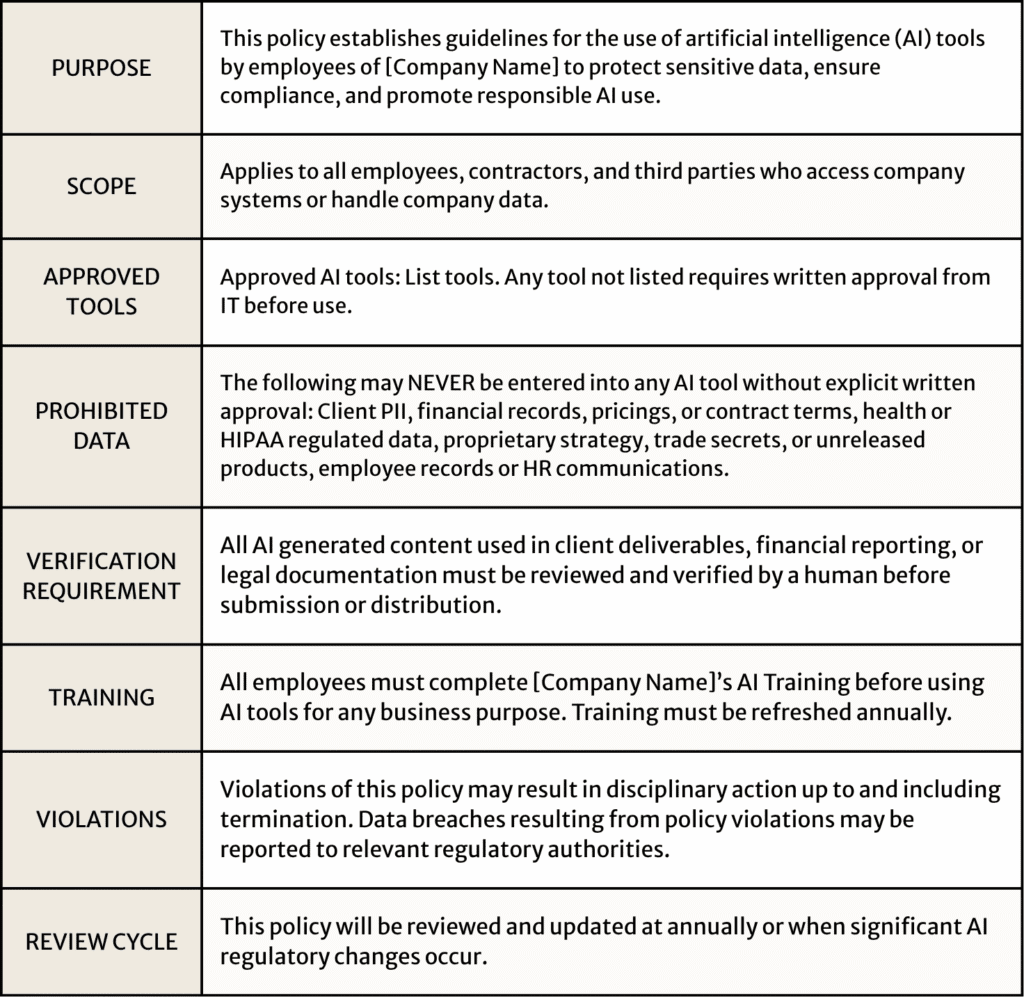

How to Create an Internal AI Usage Policy

You don’t need a legal team or a large IT department to put guardrails around AI at your company. Here’s a straightforward process to get started:

Frequently Asked Questions About AI Risks for Business

Q: Is it safe to use ChatGPT for work tasks?

A: It depends on the task and which version you’re using. Consumer versions of ChatGPT may use your inputs to improve the model. Business or enterprise plans typically offer stronger data privacy protections. Always check the terms of service and avoid entering any confidential, regulated, or client-specific information.

Q: Does using AI mean we’re violating HIPAA?

A: Not automatically — but it can. If your staff enters any protected health information (PHI) into an AI tool that isn’t covered by a Business Associate Agreement (BAA), that could be a HIPAA violation. Healthcare-adjacent businesses need to be especially careful.

Q: What’s the difference between an AI hallucination and a regular mistake?

A: A regular mistake comes from incorrect data or human error. An AI hallucination is when the model confidently generates information that doesn’t exist – a fake statistic, a fictional case, a made-up citation. The danger is that it sounds completely credible, making it easy to miss without careful review.

Q: Do we need a formal AI usage policy if we’re a small business?

A: Yes. In fact, smaller businesses are often more vulnerable because they lack the compliance infrastructure that larger organizations have. A simple, one-page policy that defines approved tools, prohibited data types, and review expectations goes a long way.

Q: How do I know if an AI tool is enterprise-grade and safe?

A: Look for tools that offer a signed Data Processing Agreement (DPA), clearly state they don’t use your inputs for model training, support single sign-on (SSO) and role-based access controls, and provide audit logs. If a vendor can’t answer these questions, that’s a red flag.

Ready to Put the Right Guardrails in Place?

AI is a powerful tool — but only when your business is set up to use it safely. At Macatawa Technologies, we help West Michigan businesses navigate exactly this: from evaluating your current AI exposure to building practical usage policies your team will actually follow.

If you missed our webinar, "AI Security and Risk: How Businesses Use AI Without Putting Data at Risk," you can watch the recording on our YouTube channel: The Risks AI Brings to Your Business.

Have more questions about this topic? We’re here to help. Contact us for answers, guidance, or support.